AI automation has evolved far beyond basic chatbots and simple task triggers. Today, businesses are building production-grade LLM applications and AI agents that connect to real systems, process data, and execute workflows automatically. Tools like n8n, modern APIs, and large language models (LLMs) make this possible—but only when used with the right architecture.

This guide explains how AI automation works in practice, how LLM apps and AI agents are built using n8n and APIs, and why orchestration, monitoring, and control matter more than prompts alone.

What Is AI Automation?

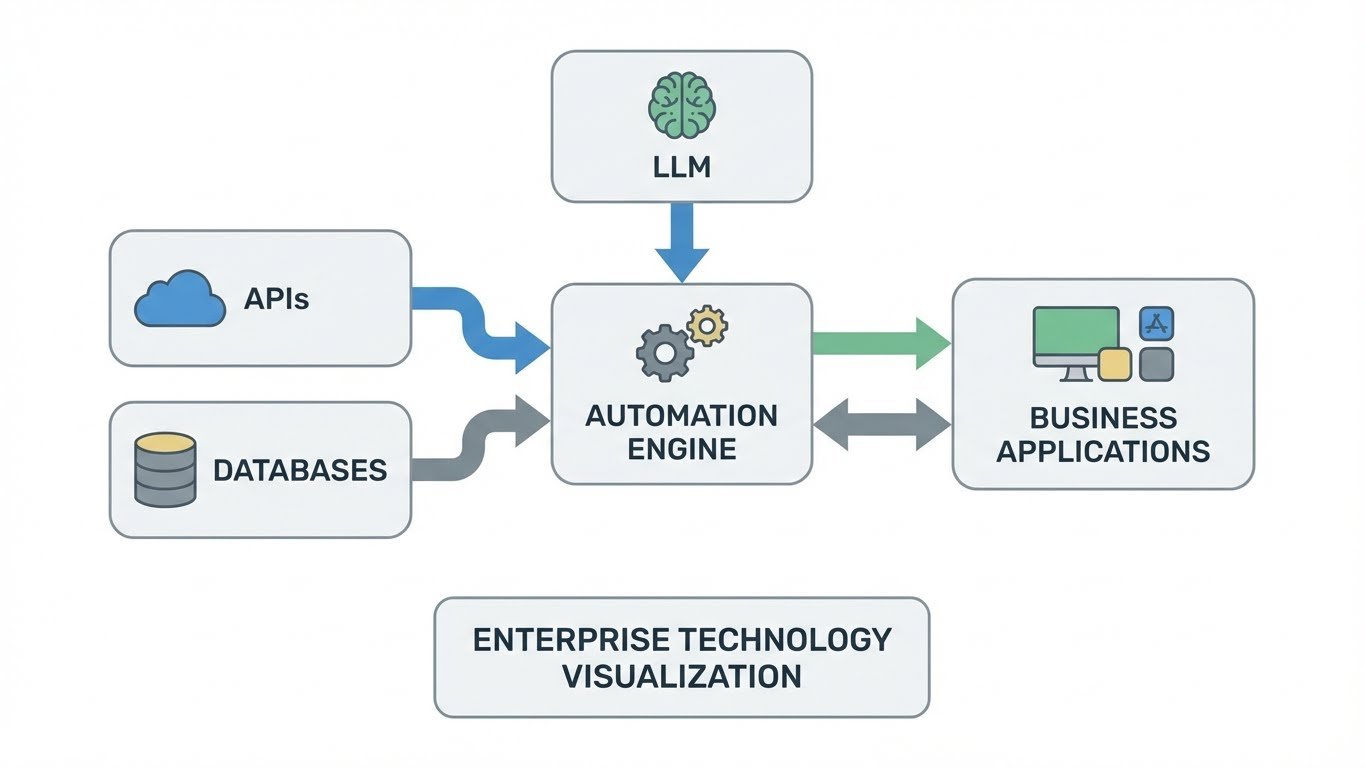

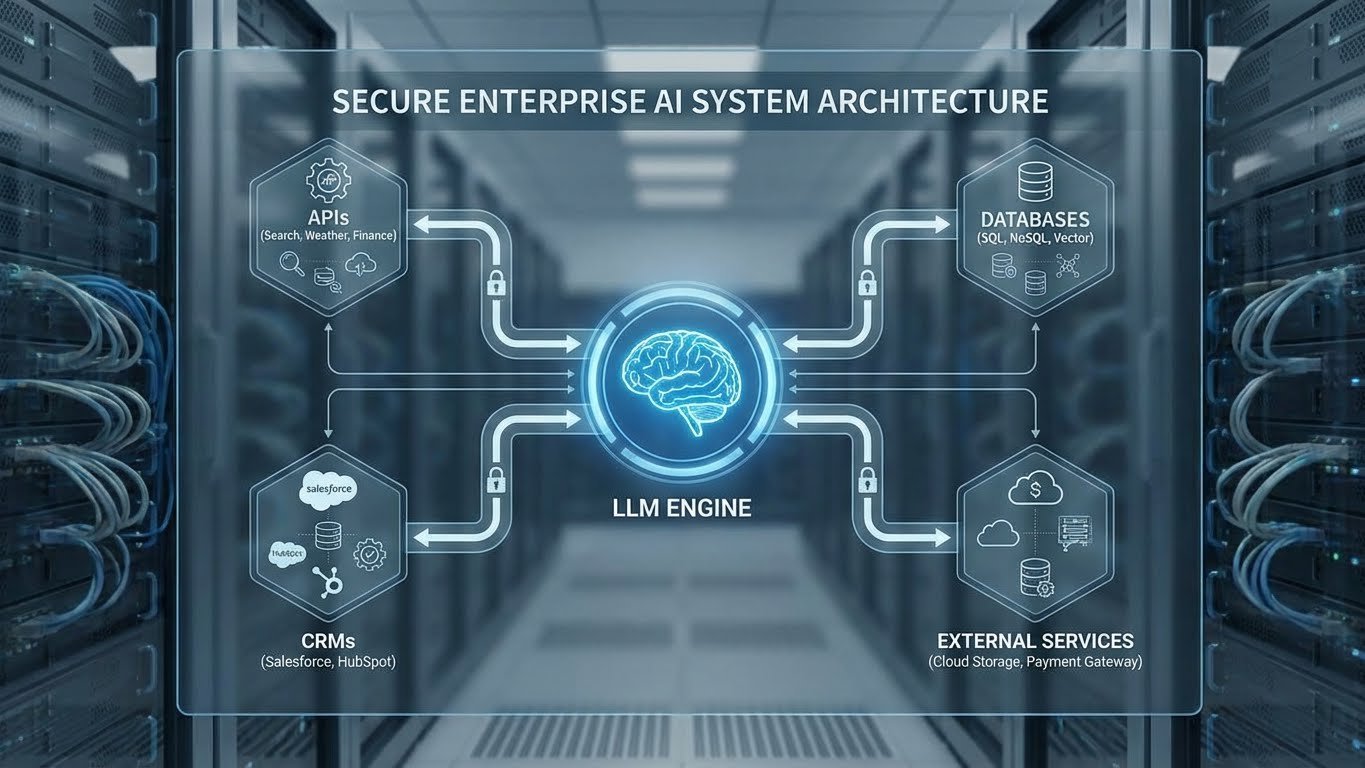

AI automation refers to intelligent workflows where AI systems don’t just respond—they reason, decide, and act. Unlike traditional automation, AI automation integrates LLMs into structured processes that interact with APIs, databases, CRMs, and third-party tools.

The goal is not full autonomy, but controlled intelligence—where AI enhances speed and consistency while remaining observable, auditable, and aligned with business goals.

LLM Apps vs AI Agents

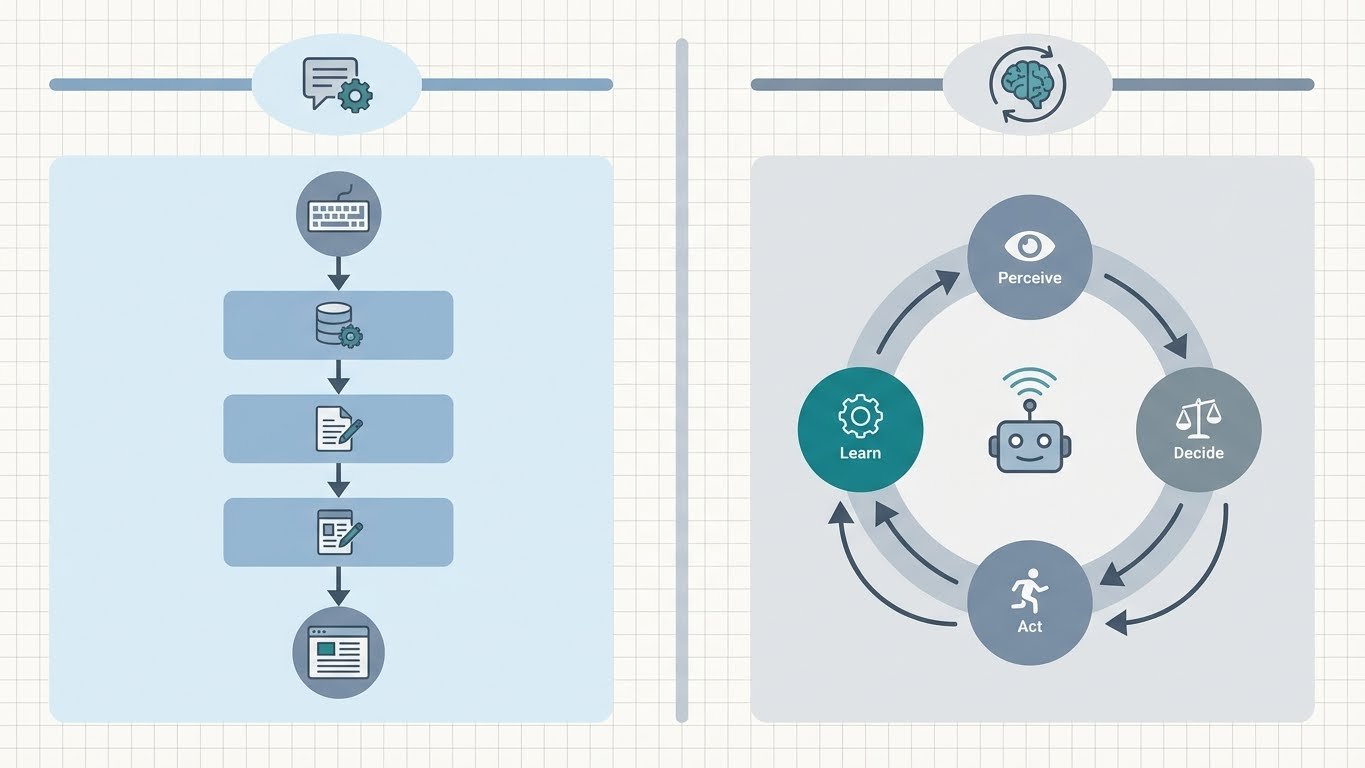

LLM apps are structured applications that embed a language model into a defined workflow. Common examples include document analysis tools, AI-powered customer support systems, and content generation pipelines.

AI agents go a step further. They can execute multi-step tasks, call multiple APIs, maintain context, and decide what action to take next. In real-world systems, most implementations use a hybrid model—LLM apps orchestrated with agent-like decision logic.
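
To make the agent idea concrete, here is a minimal sketch of a bounded agent loop. It is only an illustration: the tool names (`lookup_order`, `send_reply`) are hypothetical, and `decide_next_action` is a hard-coded stand-in for what would be an LLM call in a real system.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical tools; in a real system each would wrap an API call.
def lookup_order(order_id: str) -> str:
    return f"order {order_id}: shipped"

def send_reply(message: str) -> str:
    return f"sent: {message}"

# Allow-list of tools the agent may call.
TOOLS: Dict[str, Callable[[str], str]] = {
    "lookup_order": lookup_order,
    "send_reply": send_reply,
}

def decide_next_action(history: List[str]) -> Tuple[str, str]:
    """Stand-in for an LLM call that picks the next tool and its argument."""
    if not history:
        return ("lookup_order", "A123")
    if len(history) == 1:
        return ("send_reply", history[-1])
    return ("finish", "")

def run_agent(max_steps: int = 5) -> List[str]:
    history: List[str] = []          # context carried across steps
    for _ in range(max_steps):       # hard cap keeps the loop bounded
        action, arg = decide_next_action(history)
        if action == "finish":
            break
        if action not in TOOLS:      # refuse anything outside the allow-list
            raise ValueError(f"unknown tool: {action}")
        history.append(TOOLS[action](arg))
    return history
```

The step cap and the tool allow-list are the "agent-like decision logic under control" part: the model chooses the next step, but only from actions the workflow has explicitly permitted.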

Why n8n Is Popular for AI Automation

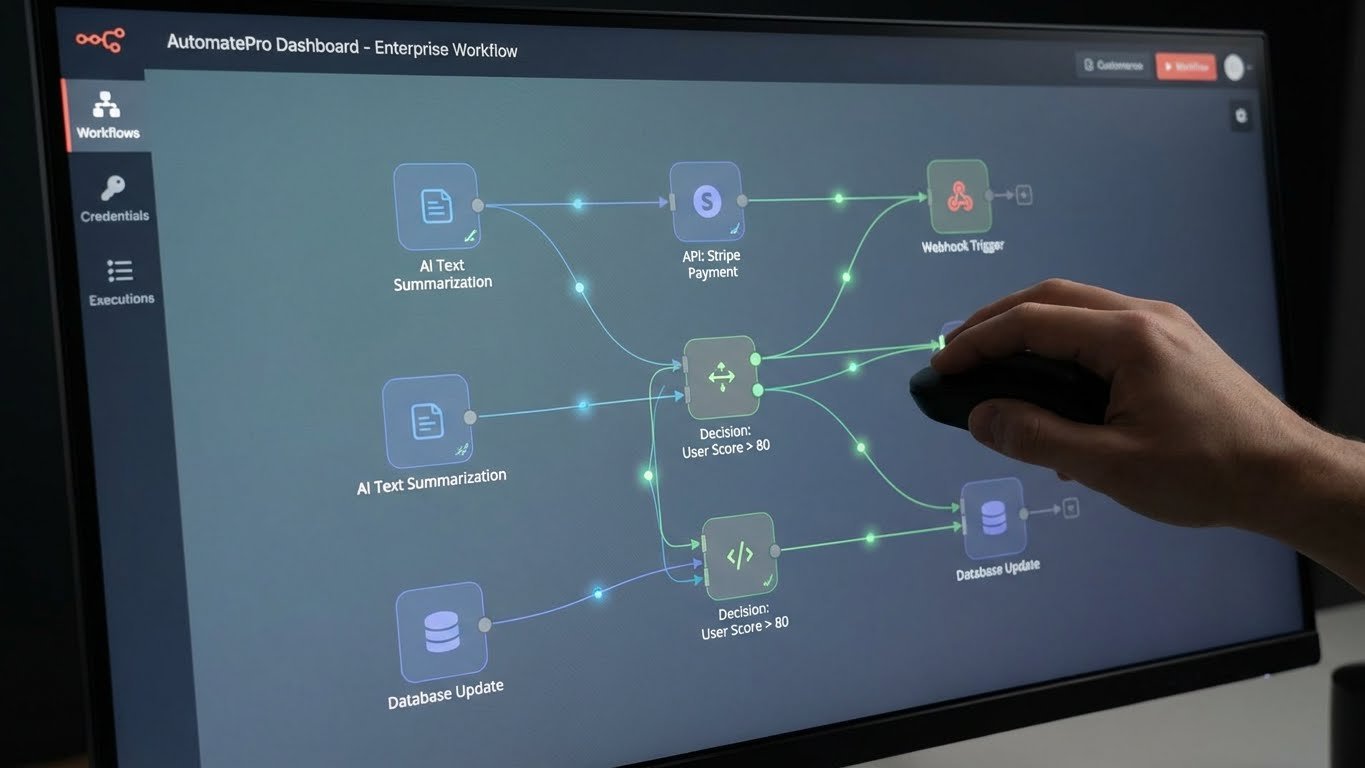

n8n has become a popular automation platform because it allows teams to visually orchestrate workflows while integrating APIs, databases, and AI models. When paired with LLMs, n8n acts as the control layer that governs execution, retries, validation, and error handling.
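
As a rough illustration of what a control layer does around a single step, the sketch below retries a failing or invalid step with exponential backoff. It is plain Python rather than n8n configuration, and the names (`call_with_retries`, `step`, `is_valid`) are assumptions made for this example.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")

def call_with_retries(
    step: Callable[[], T],
    is_valid: Callable[[T], bool],
    max_attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run a step, retrying on exceptions or invalid results with exponential backoff."""
    last_error: Optional[Exception] = None
    for attempt in range(max_attempts):
        try:
            result = step()
            if is_valid(result):
                return result
            last_error = ValueError(f"invalid result: {result!r}")
        except Exception as exc:  # a real workflow would catch narrower error types
            last_error = exc
        if attempt < max_attempts - 1:
            sleep(base_delay * 2 ** attempt)  # delays double: 0.5s, 1s, 2s, ...
    raise RuntimeError(f"step failed after {max_attempts} attempts") from last_error
```

Passing `sleep` as a parameter is a small design choice that makes the retry logic testable without real waiting.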

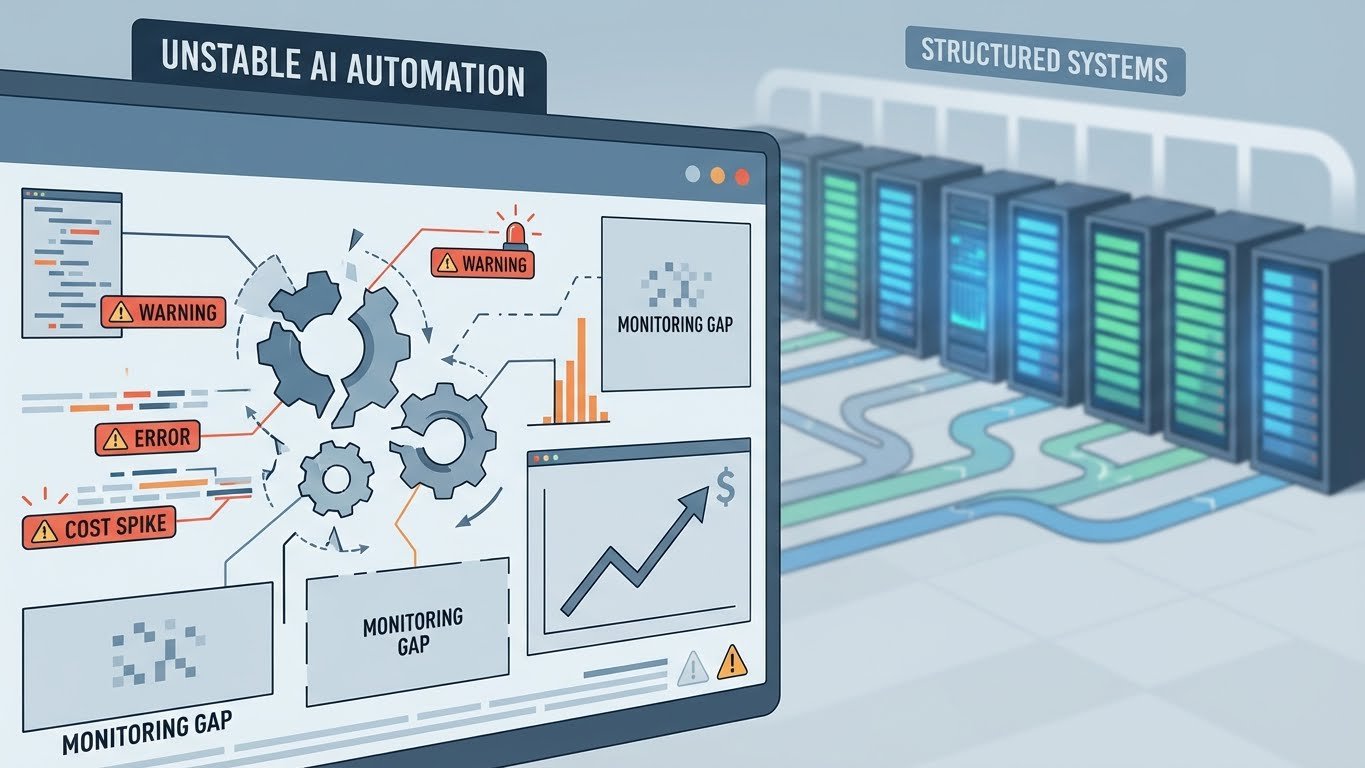

However, n8n alone is not a complete solution. Without deliberate workflow design, such as bounded retries, validated outputs, and usage limits, AI workflows can become brittle, expensive, and difficult to monitor.

The Role of APIs in AI Automation

APIs form the backbone of production AI systems. LLMs rely on APIs to fetch real-time data, update systems, trigger actions, and integrate with existing software. Secure authentication, rate limiting, and data validation are essential to avoid failures and unexpected costs.
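
Rate limiting is one of the easier safeguards to sketch. The example below is a simple token bucket, one common way to cap how often a workflow calls an API. The class name and parameters are assumptions for illustration, not part of any particular library.

```python
import time
from typing import Callable

class TokenBucket:
    """Allow roughly `rate` calls per second on average, with bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)   # start with a full bucket
        self.clock = clock
        self.updated = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

A caller would check `bucket.allow()` before each outbound request and either wait or skip the call when it returns `False`.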

Well-designed AI automation systems ensure AI outputs are validated before triggering downstream actions, reducing risk and maintaining reliability.
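
A minimal sketch of that validation step might look like the following. It assumes the model has been asked to return a JSON object with an `action` and a `confidence` field. Both field names and the allowed action values are hypothetical.

```python
import json
from typing import Any, Dict

# Hypothetical action names; a real workflow would define its own allow-list.
ALLOWED_ACTIONS = {"create_ticket", "escalate", "no_action"}

def parse_model_output(raw: str) -> Dict[str, Any]:
    """Parse and validate model output; raise ValueError on anything unexpected."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model output is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("model output must be a JSON object")
    action = data.get("action")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        raise ValueError(f"unexpected action: {action!r}")
    confidence = data.get("confidence")
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        raise ValueError("confidence must be a number")
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return {"action": action, "confidence": float(confidence)}
```

Only a value returned by `parse_model_output` would be passed on to the step that actually touches a CRM, database, or third-party API. Anything that raises is handled as an error instead.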

Common Mistakes in AI Automation Projects

Many teams can build AI automation demos quickly but struggle in production. Common issues include lack of monitoring, uncontrolled model usage costs, poor error handling, and AI agents acting without safeguards.

Production-grade AI automation requires governance, observability, and human-in-the-loop checkpoints—not just functional prompts.
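
Two of these safeguards, a spending cap and a human-in-the-loop checkpoint, can be sketched in a few lines. The `Guardrail` class, its thresholds, and the dollar figures below are illustrative assumptions, not recommended values.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class Guardrail:
    budget_usd: float                      # hypothetical per-run spending cap
    approval_threshold: float = 0.8        # below this confidence, a human reviews
    spent_usd: float = 0.0
    review_queue: List[str] = field(default_factory=list)

    def charge(self, cost_usd: float) -> None:
        """Record model spend; refuse the charge if it would exceed the cap."""
        if self.spent_usd + cost_usd > self.budget_usd:
            raise RuntimeError("model budget exceeded; halting workflow")
        self.spent_usd += cost_usd

    def route(self, action: str, confidence: float) -> str:
        """Auto-approve confident actions; queue the rest for human review."""
        if confidence >= self.approval_threshold:
            return "auto"
        self.review_queue.append(action)
        return "needs_review"
```

In use, a workflow would call `charge` before each model request and `route` before each side-effecting action, so both cost and autonomy stay bounded and visible.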

How NexusDevStudio Delivers Production-Grade AI Automation

At NexusDevStudio, we specialize in building reliable AI automation systems using LLMs, n8n, and secure API integrations. We focus on production readiness—not demos or prompt hacks.

Our AI Automation services include LLM app development, AI agent orchestration, workflow design, monitoring, and scalable deployment. Learn more about these services at NexusDevStudio.

Final Thoughts

AI automation with LLMs, n8n, and APIs can deliver substantial efficiency gains when built correctly. The difference between a short-lived experiment and a durable system lies in orchestration, control, and monitoring.

With the right architecture, AI automation becomes a long-term competitive advantage—not a risky experiment.